Background

Big Data has been a hot topic over the last few years. Big Data on public clouds, such as AWS’s Elastic MapReduce, has been gaining even more popularity as cloud computing becomes more of an industry standard.

R is an open source project for statistical computing and graphics. It has been growing in popularity at universities and companies for linear and nonlinear modeling, classical statistical tests, time-series analysis, and more.

RHadoop was developed by Revolution Analytics to interface with Hadoop. Revolution Analytics builds analytic software solutions using R.

AppScale is an open source PaaS that implements the Google AppEngine API on IaaS environments. One of the Google AppEngine APIs that it implements is AppEngine MapReduce. AppScale's back-end support for this API uses Cloudera’s Distribution for Apache Hadoop.

Ansible is open source orchestration software that uses SSH to handle configuration management for physical and virtual machines, including machines running in the cloud.

Amazon Web Services is a public IaaS that provides infrastructure and application services in the cloud. Eucalyptus is an open source software solution that provides the AWS APIs for EC2, S3, and IAM for on-premise cloud environments.

This blog entry will cover how to deploy AppScale (either on AWS or Eucalyptus), then use Ansible to configure each AppScale node with R and the RHadoop packages, in order to allow programs written in R to utilize MapReduce in the cloud.

Pre-requisites

To get started, the following is needed on a desktop/laptop computer:

- AppScale Tools installed.

- Ansible installed.

- The following AWS/Eucalyptus variables exported as environment variables in your shell:

  - EC2_ACCESS_KEY

  - EC2_SECRET_KEY

  - EC2_URL

*NOTE: These variables are used by AppScale Tools version 1.6.9. Check the AWS and Eucalyptus documentation regarding obtaining user credentials.
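Before moving on, it can help to confirm that the three variables are actually set in the current shell. The following is a minimal sketch, not part of AppScale Tools: the function name `check_ec2_env` is hypothetical, and it only checks that each variable is non-empty, not that the credentials are valid.

```shell
# Hypothetical helper (not shipped with AppScale Tools): report which of the
# required credential variables are unset or empty in the current shell.
check_ec2_env() {
    missing=""
    for name in EC2_ACCESS_KEY EC2_SECRET_KEY EC2_URL; do
        # Indirect lookup of the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            missing="$missing $name"
        fi
    done
    if [ -n "$missing" ]; then
        echo "missing:$missing"
        return 1
    fi
    echo "ok"
}

# Example use, after exporting the variables with your own values:
#   check_ec2_env && echo "credentials are exported"
```

The function returns a non-zero status when anything is missing, so it can guard later commands with `&&`.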

Deployment

AppScale

After installing AppScale Tools and Ansible, the AppScale cluster needs to be deployed. After defining the AWS/Eucalyptus variables, initialize the creation of the AppScale cluster configuration file – AppScalefile.

$ ./appscale-tools/bin/appscale init cloud

Edit the AppScalefile, providing information for the keypair, security group, and AppScale AMI/EMI. The keypair and security group do not need to be pre-created. AppScale will handle this. The AppScale AMI on AWS (us-east-1) is ami-4e472227. The Eucalyptus EMI will be unique based upon the Eucalyptus cloud that is being used. In this example, the AWS AppScale AMI will be used, and the AppScale cluster size will be 3 nodes. Here is the example AppScalefile:

---

group : 'appscale-rmr'

infrastructure : 'ec2'

instance_type : 'm1.large'

keyname : 'appscale-rmr'

machine : 'ami-4e472227'

max : 3

min : 3

table : 'hypertable'
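A quick sanity check of the edited file can catch a missing entry or a min/max mix-up before the cluster is started. The sketch below is an illustration only: `appscalefile_get` and `check_appscalefile` are hypothetical helper names, the list of keys is simply taken from the example above (an assumption, not a statement of what AppScale requires), and the parsing handles only flat `key : value` lines rather than general YAML.

```shell
# Hypothetical helpers, not part of AppScale Tools.
# Read a top-level "key : value" entry from a flat YAML file.
# $1 = file, $2 = key. Quotes and spaces are stripped from the value.
appscalefile_get() {
    sed -n "s/^$2[[:space:]]*:[[:space:]]*//p" "$1" | tr -d "'\" " | head -n 1
}

# Check that the keys used in the example are present and that min <= max.
check_appscalefile() {
    file=$1
    for key in group infrastructure instance_type keyname machine min max; do
        if [ -z "$(appscalefile_get "$file" "$key")" ]; then
            echo "missing key: $key"
            return 1
        fi
    done
    min=$(appscalefile_get "$file" min)
    max=$(appscalefile_get "$file" max)
    if [ "$min" -gt "$max" ]; then
        echo "min ($min) is greater than max ($max)"
        return 1
    fi
    echo "ok: $min to $max nodes"
}

# Example use, from the directory containing the file:
#   check_appscalefile AppScalefile
```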

After editing the AppScalefile, start up the AppScale cluster by running the following command:

$ ./appscale-tools/bin/appscale up

Once the cluster finishes setting up, the status of the cluster can be seen by running the command below:

$ ./appscale-tools/bin/appscale status

R, RHadoop Installation Using Ansible

Now that the cluster is up and running, grab the Ansible playbook for installing R and the RHadoop rmr2 and rhdfs packages onto the AppScale nodes. The playbook can be downloaded from GitHub using git:

$ git clone https://github.com/hspencer77/ansible-r-appscale-playbook.git

After downloading the playbook, the ansible-r-appscale-playbook/production file needs to be populated with the information of the AppScale cluster. Grab the cluster node information by running the following command:

$ ./appscale-tools/bin/appscale status | grep amazon | grep Status | awk '{print $5}' | cut -d ":" -f 1

ec2-50-17-96-162.compute-1.amazonaws.com

ec2-50-19-45-193.compute-1.amazonaws.com

ec2-67-202-23-157.compute-1.amazonaws.com

Add those DNS entries to the ansible-r-appscale-playbook/production file. After editing, the file will look like the following:

[appscale-nodes]

ec2-50-17-96-162.compute-1.amazonaws.com

ec2-50-19-45-193.compute-1.amazonaws.com

ec2-67-202-23-157.compute-1.amazonaws.com

Now the playbook can be executed. The playbook requires the SSH private key to the nodes. This key will be located under the ~/.appscale folder. In this example, the key file is named appscale-rmr.key. To execute the playbook, run the following command:

$ ansible-playbook -i ansible-r-appscale-playbook/production --private-key=~/.appscale/appscale-rmr.key -v ansible-r-appscale-playbook/site.yml

Testing Out The Deployment – Wordcount.R

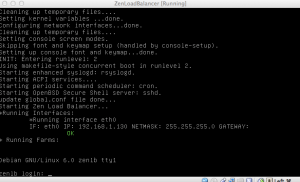

Once the playbook has finished running, the AppScale cluster is now ready to be used. To test out the setup, SSH into the head node of the AppScale cluster. To find out the head node of the cluster, execute the following command:

$ ./appscale-tools/bin/appscale status

After discovering the head node, SSH into the head node using the private key located in the ~/.appscale directory:

$ ssh -i ~/.appscale/appscale-rmr.key root@ec2-50-17-96-162.compute-1.amazonaws.com

To test out the R setup on all the nodes, grab the wordcount.R program:

root@appscale-image0:~# tar zxf rmr2_2.0.2.tar.gz rmr2/tests/wordcount.R

In the wordcount.R file, the following lines are present:

rmr2:::hdfs.put("/etc/passwd", "/tmp/wordcount-test")

out.hadoop = from.dfs(wordcount("/tmp/wordcount-test", pattern = " +"))

When the wordcount.R program is executed, it will grab the /etc/passwd file from the head node, copy it to HDFS as /tmp/wordcount-test, then run wordcount on that copy using the pattern " +" (a regular expression matching one or more spaces). NOTE: wordcount.R can be edited to use any file and pattern desired.
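To get a feel for what the job computes, the same count can be approximated on a single machine with standard Unix tools. This is only a local sketch and does not touch Hadoop or rmr2; the function name `local_wordcount` is hypothetical, and it assumes the pattern acts as the word separator, so tokens are split on runs of spaces.

```shell
# Local, single-machine sketch of the word count; no Hadoop involved.
# $1 = input file. Tokens are separated by one or more spaces (" +").
local_wordcount() {
    tr -s ' ' '\n' < "$1" |   # "map": emit one token per line
        grep -v '^$' |        # drop empty tokens (e.g. from leading spaces)
        sort |                # "shuffle": bring identical tokens together
        uniq -c               # "reduce": count each group of identical tokens
}

# Example use on the head node:
#   local_wordcount /etc/passwd
```

Each output line holds a count followed by the token. Comparing a few of these counts with the values returned by from.dfs() is a simple way to check that the MapReduce job ran over the expected input.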

Run wordcount.R:

root@appscale-image0:~# R

R version 2.15.3 (2013-03-01) -- "Security Blanket"

Copyright (C) 2013 The R Foundation for Statistical Computing

ISBN 3-900051-07-0

Platform: x86_64-pc-linux-gnu (64-bit)

R is free software and comes with ABSOLUTELY NO WARRANTY.

You are welcome to redistribute it under certain conditions.

Type 'license()' or 'licence()' for distribution details.

Natural language support but running in an English locale

R is a collaborative project with many contributors.

Type 'contributors()' for more information and

'citation()' on how to cite R or R packages in publications.

Type 'demo()' for some demos, 'help()' for on-line help, or

'help.start()' for an HTML browser interface to help.

Type 'q()' to quit R.

[Previously saved workspace restored]

> source('rmr2/tests/wordcount.R')

Loading required package: Rcpp

Loading required package: RJSONIO

Loading required package: digest

Loading required package: functional

Loading required package: stringr

Loading required package: plyr

13/04/05 02:33:41 INFO security.UserGroupInformation: JAAS Configuration already set up for Hadoop, not re-installing.

13/04/05 02:33:43 INFO security.UserGroupInformation: JAAS Configuration already set up for Hadoop, not re-installing.

packageJobJar: [/tmp/RtmprcYtsu/rmr-local-env19811a7afd54, /tmp/RtmprcYtsu/rmr-global-env1981646cf288, /tmp/RtmprcYtsu/rmr-streaming-map198150b6ff60, /tmp/RtmprcYtsu/rmr-streaming-reduce198177b3496f, /tmp/RtmprcYtsu/rmr-streaming-combine19813f7ea210, /var/appscale/hadoop/hadoop-unjar5632722635192578728/] [] /tmp/streamjob8198423737782283790.jar tmpDir=null

13/04/05 02:33:44 WARN snappy.LoadSnappy: Snappy native library is available

13/04/05 02:33:44 INFO util.NativeCodeLoader: Loaded the native-hadoop library

13/04/05 02:33:44 INFO snappy.LoadSnappy: Snappy native library loaded

13/04/05 02:33:44 INFO mapred.FileInputFormat: Total input paths to process : 1

13/04/05 02:33:44 INFO streaming.StreamJob: getLocalDirs(): [/var/appscale/hadoop/mapred/local]

13/04/05 02:33:44 INFO streaming.StreamJob: Running job: job_201304042111_0015

13/04/05 02:33:44 INFO streaming.StreamJob: To kill this job, run:

13/04/05 02:33:44 INFO streaming.StreamJob: /root/appscale/AppDB/hadoop-0.20.2-cdh3u3/bin/hadoop job -Dmapred.job.tracker=10.77.33.247:9001 -kill job_201304042111_0015

13/04/05 02:33:44 INFO streaming.StreamJob: Tracking URL: http://appscale-image0:50030/jobdetails.jsp?jobid=job_201304042111_0015

13/04/05 02:33:45 INFO streaming.StreamJob: map 0% reduce 0%

13/04/05 02:33:51 INFO streaming.StreamJob: map 50% reduce 0%

13/04/05 02:33:52 INFO streaming.StreamJob: map 100% reduce 0%

13/04/05 02:33:59 INFO streaming.StreamJob: map 100% reduce 33%

13/04/05 02:34:02 INFO streaming.StreamJob: map 100% reduce 100%

13/04/05 02:34:04 INFO streaming.StreamJob: Job complete: job_201304042111_0015

13/04/05 02:34:04 INFO streaming.StreamJob: Output: /tmp/RtmprcYtsu/file1981524ee1a3

13/04/05 02:34:05 INFO security.UserGroupInformation: JAAS Configuration already set up for Hadoop, not re-installing.

13/04/05 02:34:07 INFO security.UserGroupInformation: JAAS Configuration already set up for Hadoop, not re-installing.

13/04/05 02:34:08 INFO security.UserGroupInformation: JAAS Configuration already set up for Hadoop, not re-installing.

13/04/05 02:34:10 INFO security.UserGroupInformation: JAAS Configuration already set up for Hadoop, not re-installing.

Deleted hdfs://10.77.33.247:9000/tmp/wordcount-test

> quit("yes")

That's it! The AppScale cluster is ready for additional R programs that utilize MapReduce. Enjoy the world of Big Data on public/private IaaS.